Fractals and Benoit

Mandelbrot

A Computer Science Discovery

often considered kin to Prime Numbers.

Fractals are a very important discovery of an astute

mathematician at IBM, one Benoit Mandelbrot.

In 1977, Mandelbrot

found that certain mathematical formula can define a

shape which when displayed, appears to contain

smaller representations of itself similar in nature

(geometry and shape) within itself. His analysis of

why this occurred lead to a

series of calculations that allow images to be

created that have virtually infinite self-definition, the more

you zoom in on them, the more detail is exposed

bearing strong

similarity

to the image that contains them.

similarity

to the image that contains them.

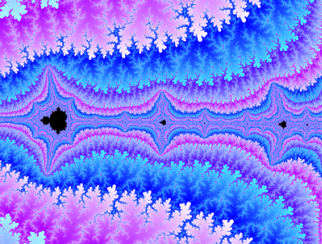

Fractals themselves, are visually

stimulating to look at. The picture to the right is

called a "Mandelbrot".

However, their ability to carry or modify data is

extremely useful

to computer science in encoding, decoding, and

ciphers. For example, by using

Fractal Geometry Mathematics, one can reduce a

fairly complex image shape down to a very small,

compressed object which if later is decompressed,

sufficiently resembles the original shape, that it

is recognizable by the human mind with considerable

accuracy.

If that process is

done to a series of still images in a video stream,

when recomposed and played back in the same

sequences as the original video, will resemble the

original video they were composed of sufficiently, to allow

the human mind to grasp the content despite the

distortion of the image that ultimately takes place

during the process.

One company, Fractal Geometries, in the mid 90's,

teamed up with what today is known as Real Networks,

to adapt this technology so as to create video that

could play back along a single 33.6 to 56K bit per

second dial up stream, when such were popular on the

Internet, and DSL and Cable were not yet caught on.

While the playback was not very high quality when

viewed at the low speeds of a 56K or slower modem

line, combining it with progressive scan compression and motion

detection compression, a realistic representation of an original

60 FPS NTSV Television Video was able to be

transmitted at approximately 53KBPS to a user of

Real Player, admittedly at about half speed and poor

quality (but not much worse than early TV back in

the 30's and 40's).

Early Bell Laboratories studies were

able to transmit video at 1MHz across three pairs of

copper wire, however it required over 3 seconds to

accumulate a single still frame at a time. Later

studies in the Video Compression Lab at AT&T Bell Labs

in Crawford Corners, NJ, found that using two 56K (128KBPS) Digital ISDN

lines, a video signal could be sent at 5-12.5 frames

per second, using the early Bell Picturephone type

device as a

source as well as other video devices and advanced

data compression technology. Not having this 128K

Digital throughput in

the average 48-53KBPS Trellis Coded LZW compressed Dial

Up telephone line to the Internet, the adaptation of fractal

compression (which searches for similarities within

video images) made it possible to watch a somewhat

distorted image at as low as 28KBPS, leading to eventual adoption of

video as a medium that could be watched across the

Internet, even using slow modem driven lines.

The presentation below, a lecture by Benoit Mandelbrot

at MIT, uses an adaptation of Mendelbrot's Fractals to

display MPEG-2 Video, particularly visible in the Low

Speed 56K Transmission link.

It was this breakthrough which led

Real Networks into the marketplace with a commercially

viable player for low speed line use when viewing

Television, in the mid 90's. Thus, the competition to dominate the Desktop

Internet Video Marketplace was born, in a

competition between Real Player, Microsoft Media

Player, Apple Quicktime Player and

various other companies.

Discovering this ability in Fractals and incorporating the

method into a

compression algorithm, gave Real an early lead by

enabling snail like dial up telephone lines to play

back digital video which ordinarily might require

440KBPS or faster signals.. But, as in all things,

Mandelbrot's discovery was not purely an invention, just an

observation based on the prior work of someone in

the uses of fractal

mathematics. That exercise, of all things, was

strongly brought to bear by Code Breakers trying

to decode messages, used by the early Decoders group

at the famed Cryptology Lab at Bletchley Park in England during WWII.

Scientists who migrated to America from Germany

after the war who came to work for IBM, stumped by

how Allies had managed to decode German radio

transmissions successfully, leading to Nazi

Germany's defeat, had commissioned studies at IBM in

its Yorktown Heights research center to examine

various methods for encoding, decoding, encrypting

and decrypting information. Byproduct to that

secret study, Benoit Mandelbrot stumbled on to the

use of certain equations such as the series invented by

Gaston Julia, called the "Julia Set" which is based

on the form: z^2 + c, where c is a

complex constant with real and imaginary numbers

in representing shapes. This, after lengthy

experimentation, led to the mathematical exposure of

Fractals at IBM for the very first time since same

was first explored by the Code Breakers in the 1940's.

This occurred decades after it had surfaced in

England for use examining German radio transmissions

looking for details that would escape normal

filtering methods, using the famed British COLOSSUS

computers, invented by Sir Thomas Flowers, the

inventor of the Programmable Electronic Computer.

It is ironic that some have suggested that those who

commissioned the study at IBM were trying to figure

out how it was all done, since methods like that don't

become obsolete, particularly if kept a secret long

enough.

Ironic because during WWII, IBM

was, covertly, Nazi Germany's primary provider of

computation and tabulation technology. Germany was

defeated, in part, by the discoveries made by the

British Mathematicians and Codebreakers at Bletchley

Park and the Colossus Computers invented by Sir

Thomas Flowers that made it possible to perform such

calculations thousands of times faster than the

human mind was able. While the discovery of Fractals

helped illuminate one of the many angles that led to

Nazi Germany's defeat, science today still hasn't

figured out the vast majority of the secrets, which

have been kept a secret since 1941. Some have

breathed a sigh of relief that IBM has not made much

progress in that area.

Was IBM's Mandelbrot the inventor of Fractals?

Answer: No, not really.

No, not exactly. British cartographers

trying to map the English Coastline, noted in early

King's history, that there was a peculiar phenomenon

that occurred as they tried to create more and more

detailed maps for the King. As they charted

the coastline, they noted in their records that the

closer in they zoomed to include more and more

details in minutia on larger maps of each coastal

segment, when they measured the length of the

British coast, it got longer and longer, as a result

of the inclusion of more irregular details.

This phenomenon was unknown to mankind, and became

the object of many mathematical theories of the era.

For, how could the coastline some 5000+ miles long,

measure 8000 miles or even longer, the more they

zoomed in on it?

Unknowingly, British

Mathematicians had discovered one of the key

aspects of a fractal: the more you zoom in on its

detail, the more details emerge. This is

unlike the average photograph, which tends

to loose detail as one zooms in on it, due to its

fixed resolution nature. You can see

an example of this loss of detail by using any of

the Aerial Photo View Mapping systems on the

Internet, that let you zoom in on any address.

The closer you get, the less detail, unlike in the

case of a Fractal, and unlike in the case of the

King's cartographers and their own notation

regarding the byproduct of more detailed, larger

maps.

Later on, the French

mathematician Gaston Julia discovered even more

characteristics regarding what have since become

known as Fractal Mathematical Polynomial Equation

Sets (or Julia and Mandelbrot Sets). He wondered

what a complex polynomial function would look like

visually,

such as the ones named after him (z^2 + c, where c

is a complex constant with real and imaginary

numbers) if recursed upon itself until it achieved a

certain level, if colored and plotted in space.

His idea translated as follows.

One takes the x and y coordinates of a point in

space, and assign them to z as in x + y*i, where i

is the square root of negative one (a non-real

number). Then: squaring

this number, and add c as a constant. Then

insert the resulting pair of real and imaginary

numbers recursively back into z, performing the

whole equation over and over, again and again.

If you keep doing that until the result is greater

than a certain magnitude number: the number of times

one must execute the formula to get it to leave it's

own "orbit" can be assigned a color. Turn the

point (x, y) that was calculated that assigned color,

unless the resulting coordinates are not yet out of

their own orbit, in which case make it black. The

results: a very visually interesting shape which, as

you expand it, displays more and more details that

resemble the original shape.

Mandelbrot studied Julia Sets and

notes of the King's Cartographers, as a byproduct of

research that was being driven by notes that were

released when Britain declassified Project MK-Ultra,

at Bletchley Park, in the mid 70's, and even though

only scant bits of information were released to the

public, these two led Mandelbrot to discover the

very interesting effects of Fractals, later more

fully exemplified by Mathematicians and Computer

Graphics Artists all around the world.

For information about Mandelbrot, kindly watch this 1

hour 20 minute lecture:

220K/Second Broadband version:

BENOIT MANDELBROT Lecturing on Self Similarity and

Fractals and Science, Engineering and Finance (roughness

and beauty) at MITWORLD in November 2001

56K (Dial up Version):

http://mfile.akamai.com/12800/rm/mitworld.download.akamai.com/12800/mitw-mandelbrot-27nov01-56k.ram

<- Requires REAL PLAYER (which, of course, uses

Mandelbrot's discovery!)

<- Requires REAL PLAYER (which, of course, uses

Mandelbrot's discovery!)

download Real by clicking on

the link above. You will need

to register with REAL NETWORKS

Examples of Fractals.

The Julia Set. Gaston Julia created a very

interesting phenomenon with his unique recursive

polynomial evaluation of real and imaginary numbers.

Here is an interesting video zoom in on a Julia Set,

which continues to expose similarity details as it

drills down inside of the visual.

CLICK HERE TO

VIEW IT >

Spanky. Noel Griffin, on the Canadian

Laboratory of Particle Physics has collected an

enormous library of Fractal Related Images and

trivia.

CLICK HERE TO GO THERE

>

DataCompression. DataCompression has compiled a study

of Fractals and their relationship to different

disciplines in compression of data streams in computer

science.

CLICK HERE TO GO THERE

>

SummerSoft's Lavendar-C - Data Encryption using

Fractal Mathematics

CLICK

HERE TO GO THERE >

Fractal Merkel Trees as apply to Data Encryption from "RSAC"

CLICK HERE TO GO THERE

>

Fractals and Physics by Volkhard Normeier

CLICK HERE TO GO THERE

>

Fractals used in Video Compression

Acceleration.

Subject: [77] Introduction to Fractal

compression (long)

SOURCE:

http://www.faqs.org/faqs/compression-faq/part2/

Written by John Kominek <kominek@links.uwaterloo.ca>

Seven things you should know about

Fractal Image Compression

(assuming that you want to know about it).

1. It is a promising new technology, arguably superior to JPEG --

but only with an argument.

2. It is a lossy compression method.

3. The fractals in Fractal Image Compression are Iterated Function

Systems.

4. It is a form of Vector Quantization, one that employs a virtual

codebook.

5. Resolution enhancement is a powerful feature but is not some

magical way of achieving 1000:1 compression.

6. Compression is slow, decompression is fast.

7. The technology is patented.

That's the scoop in condensed form. Now to elaborate,

beginning with a little

background.

A Brief History of Fractal Image Compression

-----------------------------------------------

The birth of fractal geometry (or rebirth, rather) is

usually traced to IBM

mathematician Benoit B. Mandelbrot and the 1977

publication of his seminal

book The Fractal Geometry of Nature. The book put forth

a powerful thesis:

traditional geometry with its straight lines and smooth

surfaces does not

resemble the geometry of trees and clouds and mountains.

Fractal geometry,

with its convoluted coastlines and detail ad infinitum,

does.

This insight opened vast possibilities. Computer

scientists, for one, found a

mathematics capable of generating artificial and yet

realistic looking landscapes,

and the trees that sprout from the soil. And

mathematicians had at

their disposal a new world of geometric entities.

It was not long before mathematicians asked if there was

a unity among this

diversity. There is, as John Hutchinson demonstrated in

1981, it is the branch

of mathematics now known as Iterated Function Theory.

Later in the decade

Michael Barnsley, a leading researcher from Georgia

Tech, wrote the popular

book Fractals Everywhere. The book presents the

mathematics of Iterated Functions

Systems (IFS), and proves a result known as the Collage

Theorem. The

Collage Theorem states what an Iterated Function System

must be like in order

to represent an image.

This presented an intriguing possibility. If, in the

forward direction, fractal

mathematics is good for generating natural looking

images, then, in the

reverse direction, could it not serve to compress

images? Going from a given

image to an Iterated Function System that can generate

the original (or at

least closely resemble it), is known as the inverse

problem. This problem

remains unsolved.

Barnsley, however, armed with his Collage Theorem,

thought he had it solved.

He applied for and was granted a software patent and

left academia to found

Iterated Systems Incorporated (US patent 4,941,193. Alan

Sloan is the co-

grantee of the patent and co-founder of Iterated

Systems.) Barnsley announced

his success to the world in the January 1988 issue of

BYTE magazine. This

article did not address the inverse problem but it did

exhibit several images

purportedly compressed in excess of 10,000:1. Alas, it

was not a breakthrough.

The images were given suggestive names such as "Black

Forest" and "Monterey

Coast" and "Bolivian Girl" but they were all manually

constructed. Barnsley's

patent has come to be derisively referred to as the

"graduate student algorithm."

Graduate Student Algorithm

o Acquire a graduate student.

o Give the student a picture.

o And a room with a graphics workstation.

o Lock the door.

o Wait until the student has reverse engineered the picture.

o Open the door.

Attempts to automate this process have met little

success. As Barnsley admitted

in 1988: "Complex color images require about 100 hours

each to encode and

30 minutes to decode on the Masscomp [dual processor

workstation]." That's 100

hours with a _person_ guiding the process.

Ironically, it was one of Barnsley's PhD students that

made the graduate

student algorithm obsolete. In March 1988, according to

Barnsley, he arrived

at a modified scheme for representing images called

Partitioned Iterated

Function Systems (PIFS). Barnsley applied for and was

granted a second patent

on an algorithm that can automatically convert an image

into a Partitioned

Iterated Function System, compressing the image in the

process. (US patent

5,065,447. Granted on Nov. 12 1991.) For his PhD thesis,

Arnaud Jacquin imple-

mented the algorithm in software, a description of which

appears in his land-

mark paper "Image Coding Based on a Fractal Theory of

Iterated Contractive

Image Transformations." The algorithm was not

sophisticated, and not speedy,

but it was fully automatic. This came at price: gone was

the promise of

10,000:1 compression. A 24-bit color image could

typically be compressed from

8:1 to 50:1 while still looking "pretty good."

Nonetheless, all contemporary

fractal image compression programs are based upon

Jacquin's paper.

That is not to say there are many fractal compression

programs available.

There are not. Iterated Systems sell the only commercial

compressor/decompressor,

an MS-Windows program called "Images Incorporated."

There are also an

increasing number of academic programs being made freely

available. Unfortunately,

these programs are -- how should I put it? -- of merely

academic quality.

This scarcity has much to do with Iterated Systems'

tight lipped policy about

their compression technology. They do, however, sell a

Windows DLL for programmers.

In conjunction with independent development by

researchers else-

where, therefore, fractal compression will gradually

become more pervasive.

Whether it becomes all-pervasive remains to be seen.

Historical Highlights:

1977 -- Benoit Mandelbrot finishes the first edition of The Fractal

Geometry of Nature.

1981 -- John Hutchinson publishes "Fractals and Self-Similarity."

1983 -- Revised edition of The Fractal Geometry of Nature is

published.

1985 -- Michael Barnsley and Stephen Demko introduce Iterated

Function Theory in

"Iterated Function Systems and the Global

Construction of

Fractals."

1987 -- Iterated Systems Incorporated is founded.

1988 -- Barnsley publishes the book Fractals Everywhere.

1990 -- Barnsley's first patent is granted.

1991 -- Barnsley's second patent is granted.

1992 -- Arnaud Jacquin publishes an article that describes the

first

practical fractal

image compression method.

1993 -- The book Fractal Image Compression by Michael Barnsley and

Lyman

Hurd is published.

-- The Iterated Systems' product line

matures.

1994 -- Put your name here.

On the Inside

--------------

The fractals that lurk within fractal image compression

are not those of the

complex plane (Mandelbrot Set, Julia sets), but of

Iterated Function Theory.

When lecturing to lay audiences, the mathematician

Heinz-Otto Peitgen introduces

the notion of Iterated Function Systems with the

alluring metaphor of a

Multiple Reduction Copying Machine. A MRCM is imagined

to be a regular copying

machine except that:

1. There are multiple lens arrangements to create multiple overlapping

copies of the original.

2. Each lens arrangement reduces the size of the original.

3. The copier operates in a feedback loop, with the output of one

stage the input to the next. The initial input may be

anything.

The first point is what makes an IFS a system. The third

is what makes it

iterative. As for the second, it is implicitly

understood that the functions

of an Iterated Function Systems are contractive.

An IFS, then, is a set of contractive transformations

that map from a defined

rectangle of the real plane to smaller portions of that

rectangle. Almost

invariably, affine transformations are used. Affine

transformations act to

translate, scale, shear, and rotate points in the plane.

Here is a simple

example:

|---------------|

|-----|

|x

|

|1 |

|

|

| |

|

|

|---------------|

|

| |2

|3 |

|

| |

| |

|---------------|

|---------------|

Before

After

Figure 1. IFS for generating Sierpinski's Triangle.

This IFS contains three component transformations (three

separate lens ar-

rangements in the MRCM metaphor). Each one shrinks the

original by a factor of

2, and then translates the result to a new location. It

may optionally scale

and shift the luminance values of the rectangle, in a

manner similar to the

contrast and brightness knobs on a TV.

The amazing property of an IFS is that when the set is

evaluated by iteration,

(i.e. when the copy machine is run), a unique image

emerges. This latent image

is called the fixed point or attractor of the IFS. As

guaranteed by a result

known as the Contraction Theorem, it is completely

independent of the initial

image. Two famous examples are Sierpinski's Triangle and

Barnsley's Fern.

Because these IFSs are contractive, self-similar detail

is created at every

resolution down to the infinitesimal. That is why the

images are fractal.

The promise of using fractals for image encoding rests

on two suppositions: 1.

many natural scenes possess this detail within detail

structure (e.g. clouds),

and 2. an IFS can be found that generates a close

approximation of a scene

using only a few transformations. Barnsley's fern, for

example, needs but

four. Because only a few numbers are required to

describe each transformation,

an image can be represented very compactly. Given an

image to encode, finding

the optimal IFS from all those possible is known as the

inverse problem.

The inverse problem -- as mentioned above -- remains

unsolved. Even if it

were, it may be to no avail. Everyday scenes are very

diverse in subject

matter; on whole, they do not obey fractal geometry.

Real ferns do not branch

down to infinity. They are distorted, discolored,

perforated and torn. And the

ground on which they grow looks very much different.

To capture the diversity of real images, then,

Partitioned IFSs are employed.

In a PIFS, the transformations do not map from the whole

image to the parts,

but from larger parts to smaller parts. An image may

vary qualitatively from

one area to the next (e.g. clouds then sky then clouds

again). A PIFS relates

those areas of the original image that are similar in

appearance. Using Jac-

quin's notation, the big areas are called domain blocks

and the small areas

are called range blocks. It is necessary that every

pixel of the original

image belong to (at least) one range block. The pattern

of range blocks is

called the partitioning of an image.

Because this system of mappings is still contractive,

when iterated it will

quickly converge to its latent fixed point image.

Constructing a PIFS amounts

to pairing each range block to the domain block that it

most closely resembles

under some to-be-determined affine transformation. Done

properly, the PIFS

encoding of an image will be much smaller than the

original, while still

resembling it closely.

Therefore, a fractal compressed image is an encoding

that describes:

1. The grid partitioning (the range blocks).

2. The affine transforms (one per range block).

The decompression process begins with a flat gray

background. Then the set of

transformations is repeatedly applied. After about four

iterations the attractor

stabilizes. The result will not (usually) be an exact

replica of the original, but reasonably close.

Scalelessness and Resolution Enhancement

-------------------------------------------

When an image is captured by an acquisition device, such

as a camera or scanner,

it acquires a scale determined by the sampling

resolution of that device.

If software is used to zoom in on the image, beyond a

certain point you don't

see additional detail, just bigger pixels.

A fractal image is different. Because the affine

transformations are spatially

contractive, detail is created at finer and finer

resolutions with each iteration.

In the limit, self-similar detail is created at all

levels of resolution,

down the infinitesimal. Because there is no level that

'bottoms out'

fractal images are considered to be scale-less.

What this means in practice is that as you zoom in on a

fractal image, it will

still look 'as it should' without the staircase effect

of pixel replication.

The significance of this is cause of some misconception,

so here is the right

spot for a public service announcement.

/--- READER BEWARE ---\

Iterated Systems is fond of the following argument. Take

a portrait that is,

let us say, a grayscale image 250x250 pixels in size, 1

byte per pixel. You

run it through their software and get a 2500 byte file

(compression ratio =

25:1). Now zoom in on the person's hair at 4x

magnification. What do you see?

A texture that still looks like hair. Well then, it's as

if you had an image

1000x1000 pixels in size. So your _effective_

compression ratio is 25x16=400.

But there is a catch. Detail has not been retained, but

generated. With a

little luck it will look as it should, but don't count

on it. Zooming in on a

person's face will not reveal the pores.

Objectively, what fractal image compression offers is an

advanced form of

interpolation. This is a useful and attractive property.

Useful to graphic

artists, for example, or for printing on a high

resolution device. But it does

not bestow fantastically high compression ratios.

\--- READER BEWARE ---/

That said, what is resolution enhancement? It is the

process of compressing an

image, expanding it to a higher resolution, saving it,

then discarding the

iterated function system. In other words, the compressed

fractal image is the

means to an end, not the end itself.

The Speed Problem

---------------------

The essence of the compression process is the pairing of

each range block to a

domain block such that the difference between the two,

under an affine trans-

formation, is minimal. This involves a lot of searching.

In fact, there is nothing that says the blocks have to

be squares or even

rectangles. That is just an imposition made to keep the

problem tractable.

More generally, the method of finding a good PIFS for

any given image involves

five main issues:

1. Partitioning the image into range blocks.

2. Forming the set of domain blocks.

3. Choosing type of transformations that will be considered.

4. Selecting a distance metric between blocks.

5. Specifying a method for pairing range blocks to domain blocks.

Many possibilities exist for each of these. The choices

that Jacquin offered

in his paper are:

1. A two-level regular square grid with 8x8 pixels for the large

range blocks and 4x4 for the small ones.

2. Domain blocks are 16x16 and 8x8 pixels in size with a

sub-sampling

step size of four. The 8 isometric symmetries

(four rotations,

four mirror flips) expand the domain pool to a

virtual domain

pool eight times larger.

3. The choices in the last point imply a shrinkage by two in each

direction, with a possible rotation or flip, and

then a translation

in the image plane.

4. Mean squared error is used.

5. The blocks are categorized as of type smooth, midrange, simple

edge, and complex edge. For a given range block

the respective

category is searched for the best match.

The importance of categorization can be seen by

calculating the size of the

total domain pool. Suppose the image is partitioned into

4x4 range blocks. A

256x256 image contains a total of (256-8+1)^2 =

62,001 different 8x8 domain

blocks. Including the 8 isometric symmetries increases

this total to 496,008.

There are (256-4+1)^2 = 64,009 4x4 range blocks, which

makes for a maximum of

31,748,976,072 possible pairings to test. Even on a fast

workstation an exhaustive

search is prohibitively slow. You can start the program

before departing

work Friday afternoon; Monday morning, it will still be

churning away.

Increasing the search speed is the main challenge facing

fractal image compression.

Similarity to Vector Quantization

---------------------------------

To the VQ community, a "vector" is a small rectangular

block of pixels. The

premise of vector quantization is that some patterns

occur much more frequently

than others. So the clever idea is to store only a few

of these common

patterns in a separate file called the codebook. Some

codebook vectors are

flat, some are sloping, some contain tight texture, some

sharp edges, and so

on -- there is a whole corpus on how to construct a

codebook. Each codebook

entry (each domain block) is assigned an index number. A

given image, then, is

partitioned into a regular grid array. Each grid element

(each range block) is

represented by an index into the codebook. Decompressing

a VQ file involves

assembling an image out of the codebook entries. Brick

by brick, so to speak.

The similarity to fractal image compression is apparent,

with some notable

differences.

1. In VQ the range blocks and domain blocks are the same size; in

an

IFS the domain blocks are always larger.

2. In VQ the domain blocks are copied straight; in an IFS each

domain

block undergoes a luminance scaling and offset.

3. In VQ the codebook is stored apart from the image being coded;

in

an IFS the codebook is not explicitly stored. It

is comprised of

portions of the attractor as it emerges during

iteration. For that

reason it is called a "virtual codebook." It has

no existence

independent of the affine transformations that

define an IFS.

4. In VQ the codebook is shared among many images; in an IFS the

virtual codebook is specific to each image.

There is a more refined version of VQ called gain-shape

vector quantization in

which a luminance scaling and offset is also allowed.

This makes the similari-

ty to fractal image compression as close as can be.

Compression Ratios

---------------------

Exaggerated claims not withstanding, compression ratios

typically range from

4:1 to 100:1. All other things equal, color images can

be compressed to a

greater extent than grayscale images.

The size of a fractal image file is largely determined

by the number of trans-

formations of the PIFS. For the sake of simplicity, and

for the sake of comparison

to JPEG, assume that a 256x256x8 image is partitioned

into a regular

partitioning of 8x8 blocks. There are 1024 range blocks

and thus 1024 trans-

formations to store. How many bits are required for

each?

In most implementations the domain blocks are twice the

size of the range

blocks. So the spatial contraction is constant and can

be hard coded into the

decompression program. What needs to be stored are:

x position of domain block

8 6

y position of domain block

8 6

luminance scaling

8 5

luminance offset

8 6

symmetry indicator

3 3

-- --

35 26 bits

In the first scheme, a byte is allocated to each number

except for the symmetry

indicator. The upper bound on the compression ratio is

thus (8x8x8)/35 =

14.63. In the second scheme, domain blocks are

restricted to coordinates

modulo 4. Plus, experiments have revealed that 5 bits

per scale factor and 6

bits per offset still give good visual results. So the

compression ratio limit

is now 19.69. Respectable but not outstanding.

There are other, more complicated, schemes to reduce the

bit rate further. The

most common is to use a three or four level quad tree

structure for the range

partitioning. That way, smooth areas can be represented

with large range

blocks (high compression), while smaller blocks are used

as necessary to

capture the details. In addition, entropy coding can be

applied as a back-end

step to gain an extra 20% or so.

Quality: Fractal vs. JPEG

-------------------------

The greatest irony of the coding community is that great

pains are taken to

precisely measure and quantify the error present in a

compressed image, and

great effort is expended toward minimizing an error

measure that most often is

-- let us be gentle -- of dubious value. These measure

include signal-to-noise

ratio, root mean square error, and mean absolute error.

A simple example is

systematic shift: add a value of 10 to every pixel.

Standard error measures

indicate a large distortion, but the image has merely

been brightened.

With respect to those dubious error measures, and at the

risk of over-simplification,

the results of tests reveal the following: for low

compression

ratios JPEG is better, for high compression ratios

fractal encoding is better.

The crossover point varies but is often around 40:1.

This figure bodes well

for JPEG since beyond the crossover point images are so

severely distorted

that they are seldom worth using.

Proponents of fractal compression counter that

signal-to-noise is not a good

error measure and that the distortions present are much

more 'natural looking'

than the blockiness of JPEG, at both low and high bit

rates. This is a valid

point but is by no means universally accepted.

What the coding community desperately needs is an easy

to compute error measure

that accurately captures subjective impression of human

viewers. Until then, your

eyes are the best judge.

The Chairman's Conclusion about

Fractals

By Dr. Jack A. Shulman

In 1979, part way

through the development of a flight control

supercomputer system later used by the Department of

Defense in a classified bomber application, I was heard

to remark that I'd adapted recursive similarities since

1975, to create a self expanding system of adaptive

supercomputers, which could subdivide the control of

flight elements and assign them to iterative self

similar subcomponents, creating a Geodesic like array of

parallel computers within larger arrays of parallel

computers within larger arrays of parallel computers.

It wasn't until Benoit Mandelbrot published on Fractals,

that I realized that neuro-computing and the associative

structure of the human brain was comprised of logic

within logic arranged not unlike my supercomputers, in

an array of Cognition, Abstraction, Inference and

Induction micro engines, in essence, neuro-ganglial

pills all arranged to create circumlocution and form a

network of knowledge which had associations within it

that were the same as larger associations in its outer

layers that had significant self similarities contained

iteratively within them to a very atomic degree. The

parallels with Mandelbrot's Fractals in 1977 amazed me.

I marveled at the mind's immediate cognitive ability to

instantly recognize those similarities in the wondrous

spires of Fractal that the neo-Mandelbrotists were

producing on computer graphics screens that zoomed in on

the details within details within details endlessly.

While the Mind is

perhaps not Fractal-ic per say, Fractals simultaneously

trigger the Cognitive function of Similarity Recognition

and Comparison in a unique, creating an almost physical

stimulus regarding "beauty and roughness" to quote

Mandelbrot. The notion of iterative self

similarity Mandelbrot frequently refers to (clearly, he

is fascinated with the notion) does seem to apply as "a

way to explain" the means that Sleep uses to recompose

the short term memories into long term memories,

holographically sweeping clean redundant copies of

similar logic, and replacing them with references to

longer term individual copies of the basic logic that

applies, with weights and measurements added for good

measure. Even then, Sleep's compression algorithm is

also not Fractal-ic.

Nonetheless,

Fractals have turned out to be a key to understanding

the Cognitive functions of the mind, and even to study

the patterns of correct and incorrect logical

abstraction and inference, found within it, for many

similarities within similarities demonstrate how the

recursive nature of Human Association often is

sufficiently vague or sufficiently weak, to simply allow

us to draw the wrong conclusions.

Nonetheless, the mere study of Fractals, itself, is mind

opening, particularly when considering how to create

images of infinite self-definition, a problem such as

viewing the surface of the Earth from space. One

may be able to see the shoreline, but can one see the

baby horseshoe sand crabs scampering across by zooming

in on the image produced by Aerial photography today?

I have a very

strong belief that eventually, humanity will learn how

to collect nearly infinite detail using sensor based

technology, that science will progress to such a degree

that eventually when we zoom in on a video image from

Space, we will eventually be able to see not only the

shore and the crabs, but the individual grains of sand

and even the atomic structure within a single one.

And I am also of the belief that in order to store this

myriad of imagery in holographic form, we are going to

have to borrow heavily from the exceptional work of

Benoit Mandelbrot, and that which he has inspired in

others. For in my vision of the eventual nearly infinite

resolution of some future orbital camera, and its

metaphor looking OUT into the Universe through some form

of successor to Hubble (or perhaps an upgraded Hubble ST

itself?) we are talking about having to store an

enormous amount of information and then, all of us will

be thankful to Benoit Mandelbrot once again!

- Dr. Jack A. Shulman, Chairman,

ACSA

The above aggregation of

information, pictures, and incorporated references is

called an "Articulum Mosaica". It was compiled by

student interns at the ACSA, under the supervision of

the office of the Chairperson who had created the

original compilation. No commercial purpose was

intended for its publication, it is published here

strictly for non-profit educational reasons.

Disclaimer:

The information on this website is presented for

educational purposes only. Information has

been obtained from qualified sources who have based

their assessments on the information provided them

that could be confirmed. Any inaccuracies or

incorrect assertions are wholly disclaimed and

unintentional.